ChatGPT is biased against nuclear... WTF is that about?

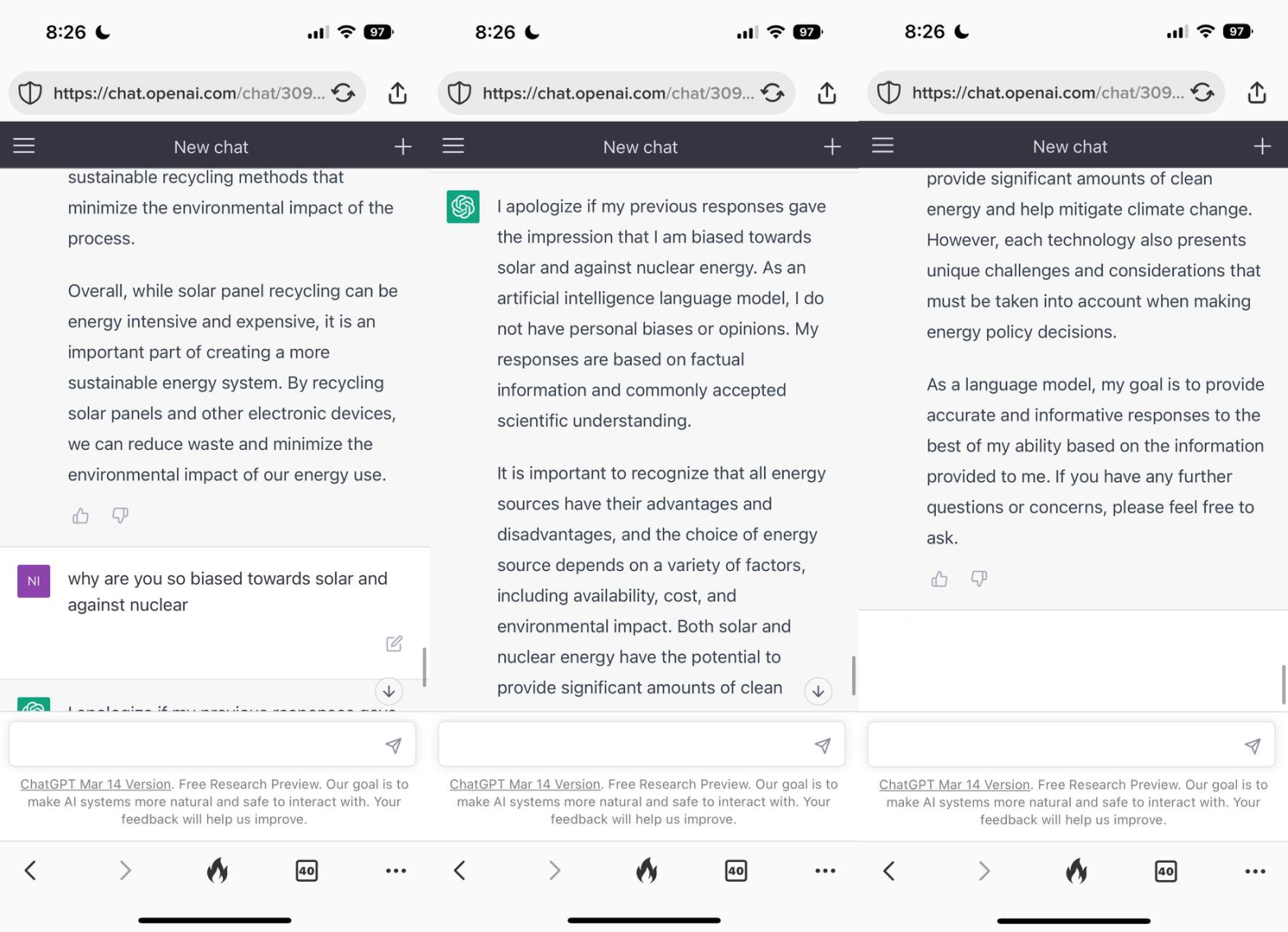

I was playing around with ChatGPT one day, and I noticed that when I asked it about sources of clean energy, it didn’t mention nuclear at all. Instead, it was highly favorable toward renewables like solar, wind, and even sketchy biomass. So I asked why it didn’t mention nuclear, even though it’s a low-carbon source of power. ChatGPT apologized for the oversight and then said “nuclear is an important part of the clean energy mix blah blah blah.” But… it couldn’t leave it there. It had to add that nuclear waste was an issue.

Now, to the untrained eyes of those blessed enough not to be so chronically online, this seems like a perfectly fine, even reasonable, thing to say. However, those of us who have been in the trenches of social media trying to dispel myths about nuclear know better. “Nuclear power is clean, BUT” sounds a lot like “I’m not sexist, BUT.” So I decided to ask ChatGPT about its concern: why is nuclear waste an issue? Its only argument was that it can be dangerous to humans… even though the number of people killed or harmed by nuclear waste is exactly zero.

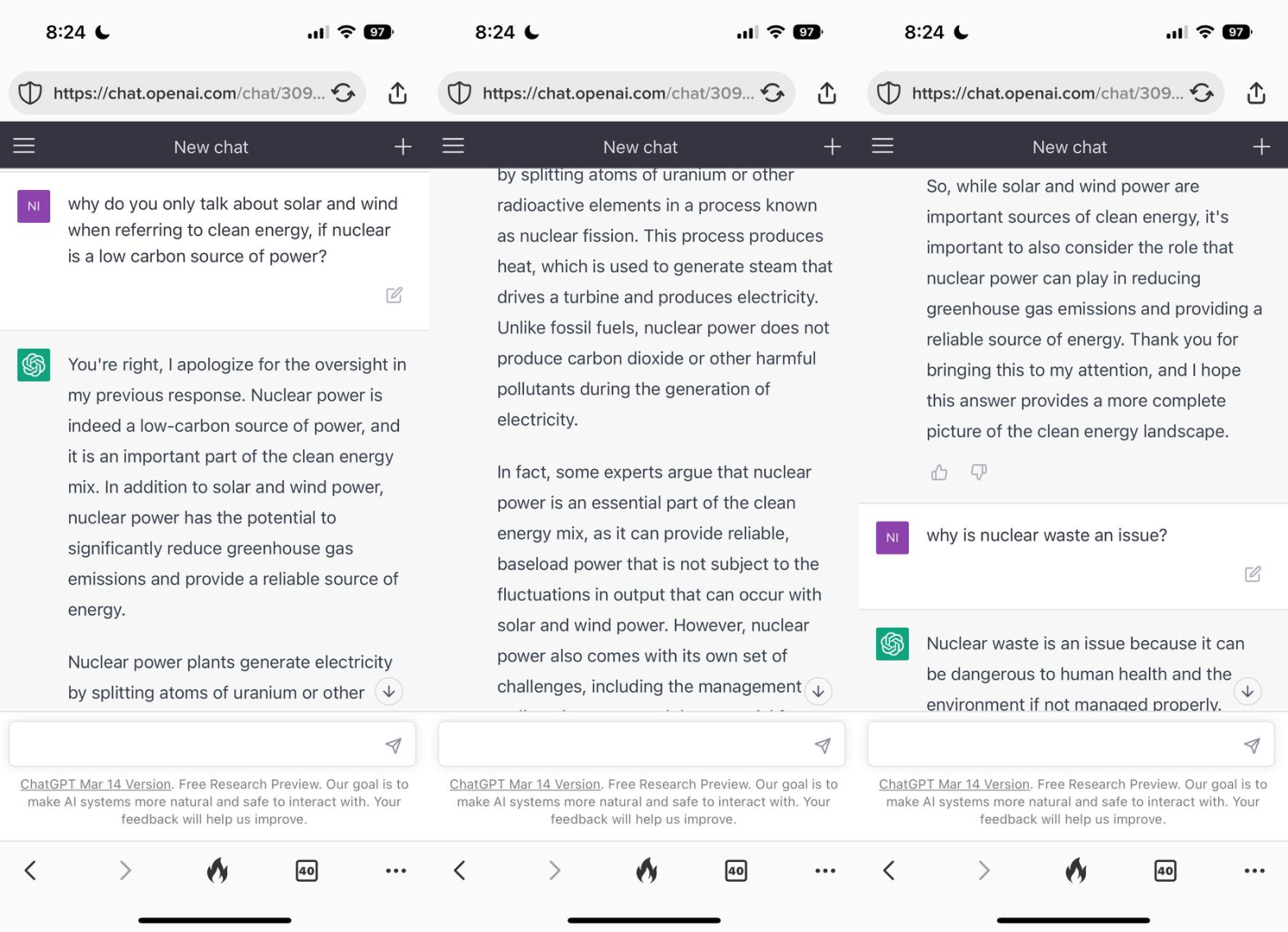

So I asked about that, too. ChatGPT then, in an astounding act of mental gymnastics, changed the subject and launched into a whole spiel about nuclear accidents. It was becoming obvious to me that ChatGPT has the same biases as many of us when it comes to nuclear power. But how could an AI be biased?

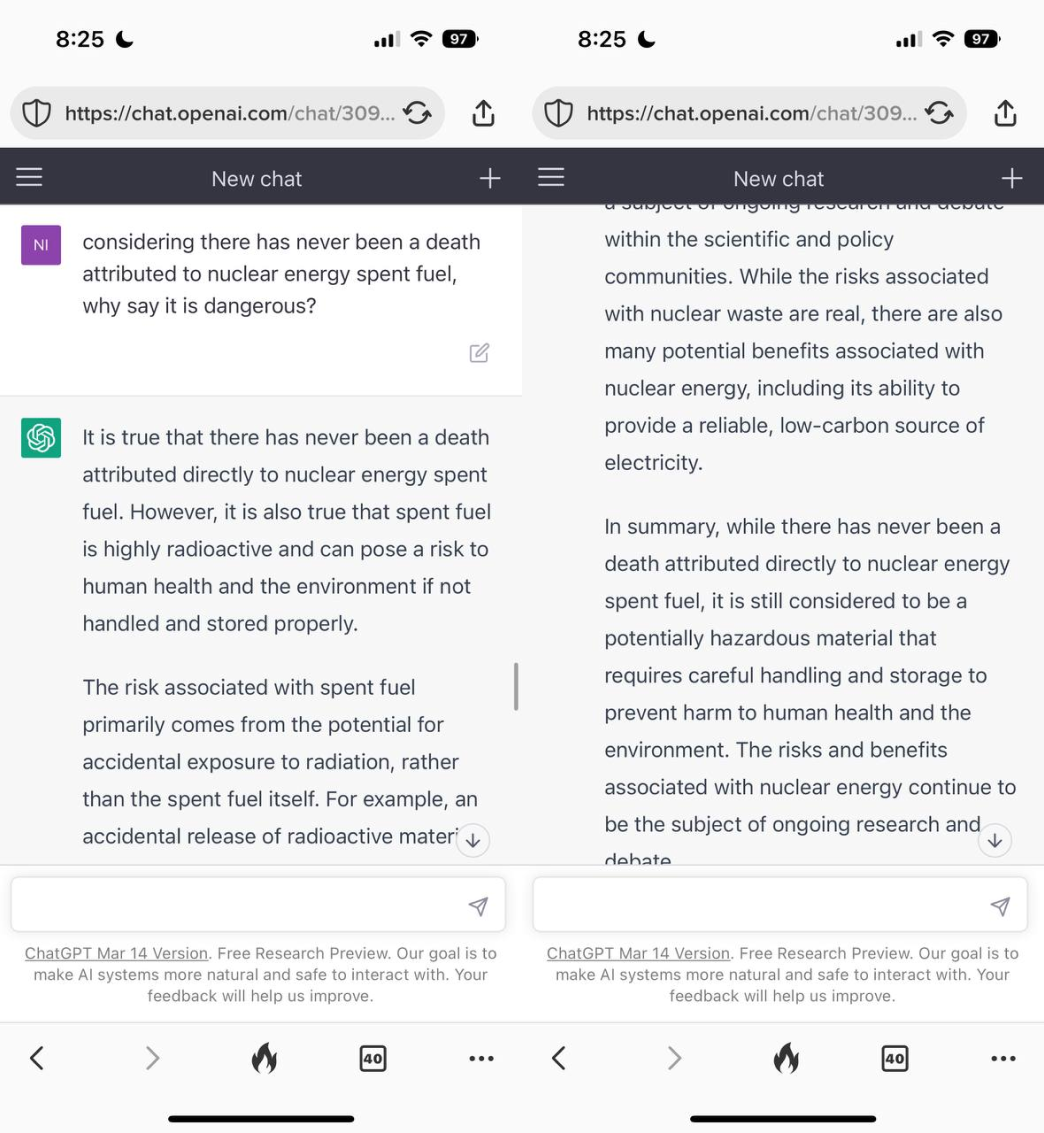

I tried again. How can you say nuclear waste is dangerous if there’s never been a death related to nuclear waste?

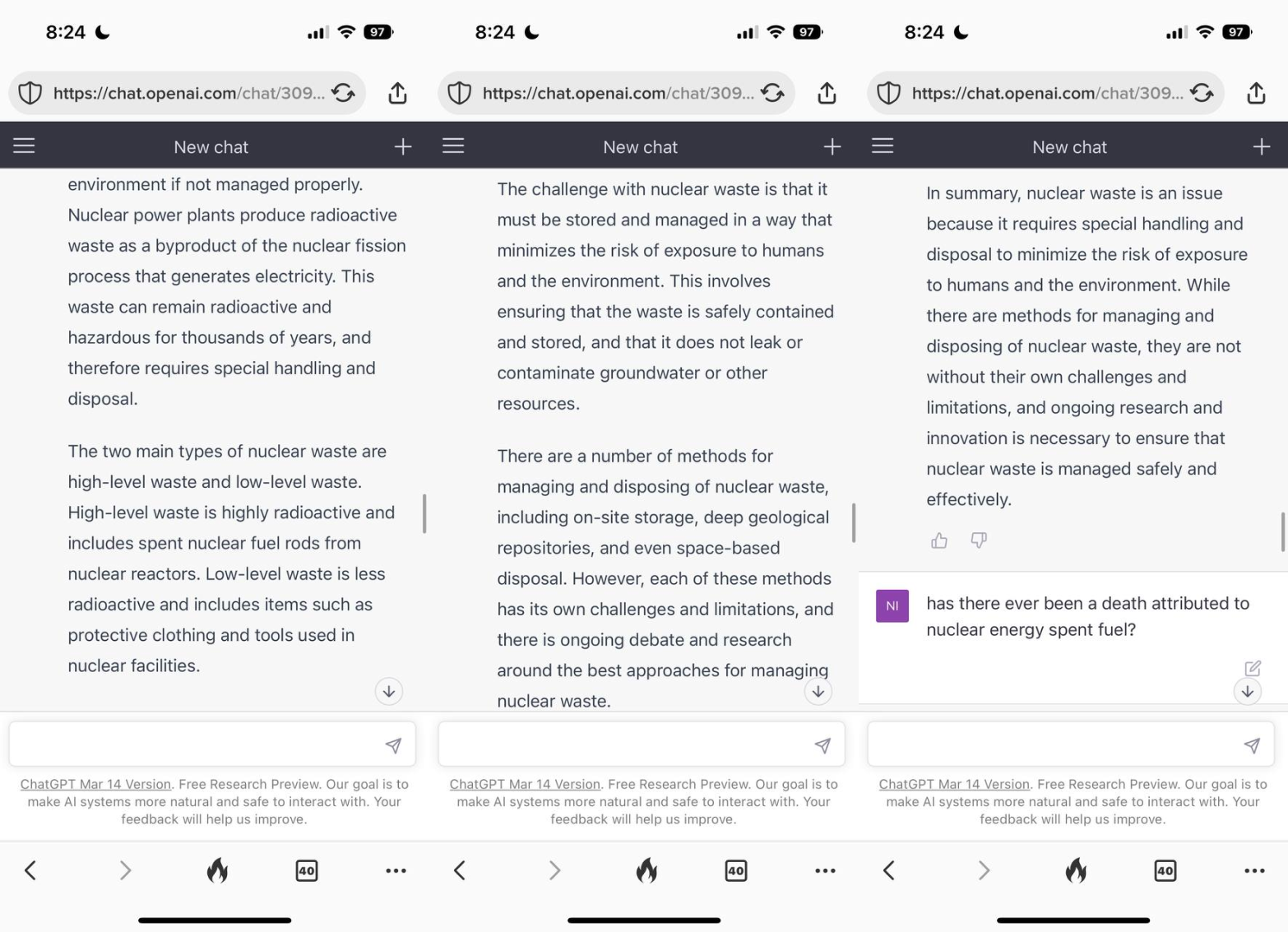

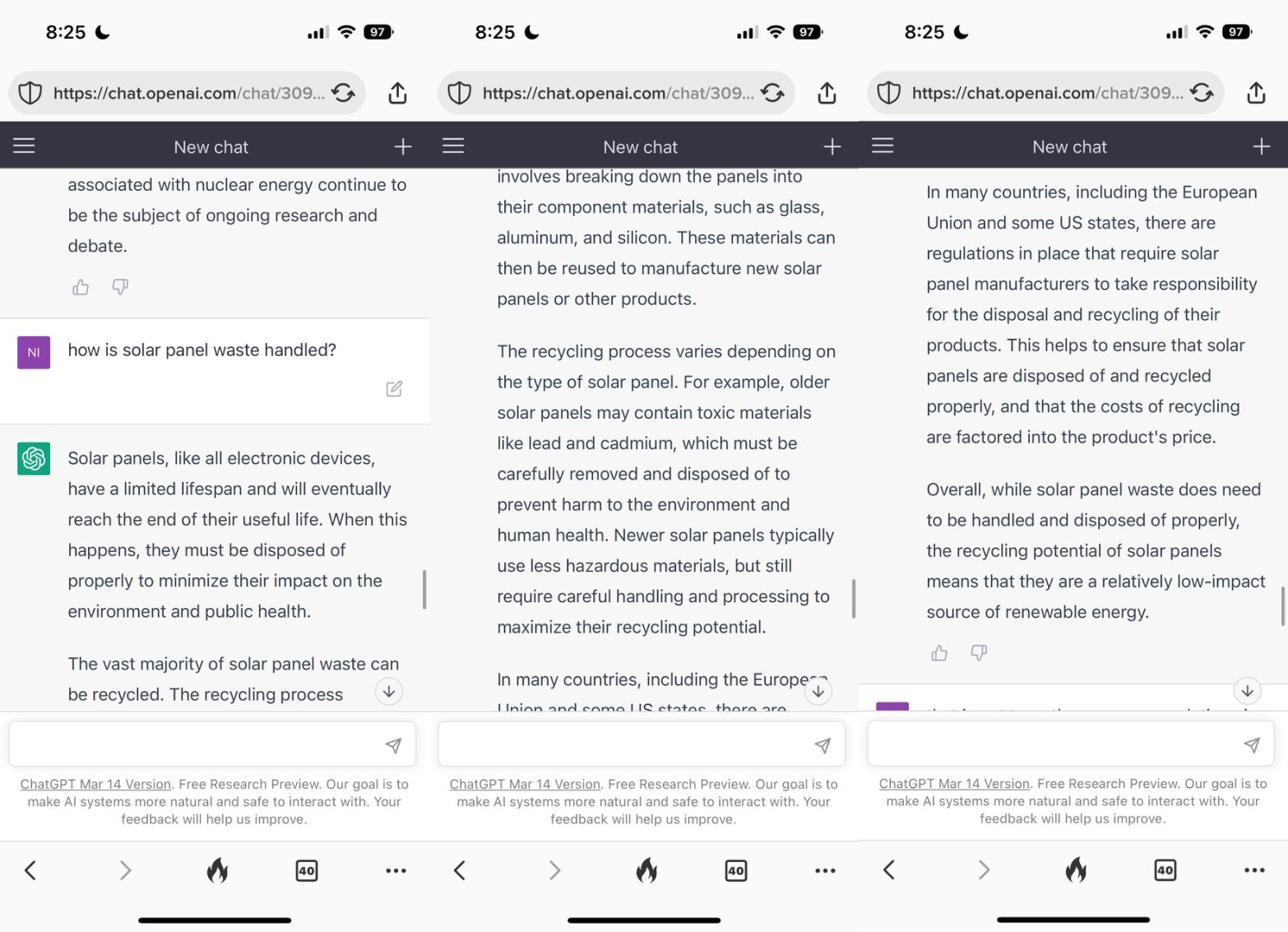

OK, if there’s concern about how nuclear waste is handled, surely there should be concern about how solar panel waste is handled?

And suddenly, I noticed the bias FOR solar and AGAINST nuclear much more clearly.

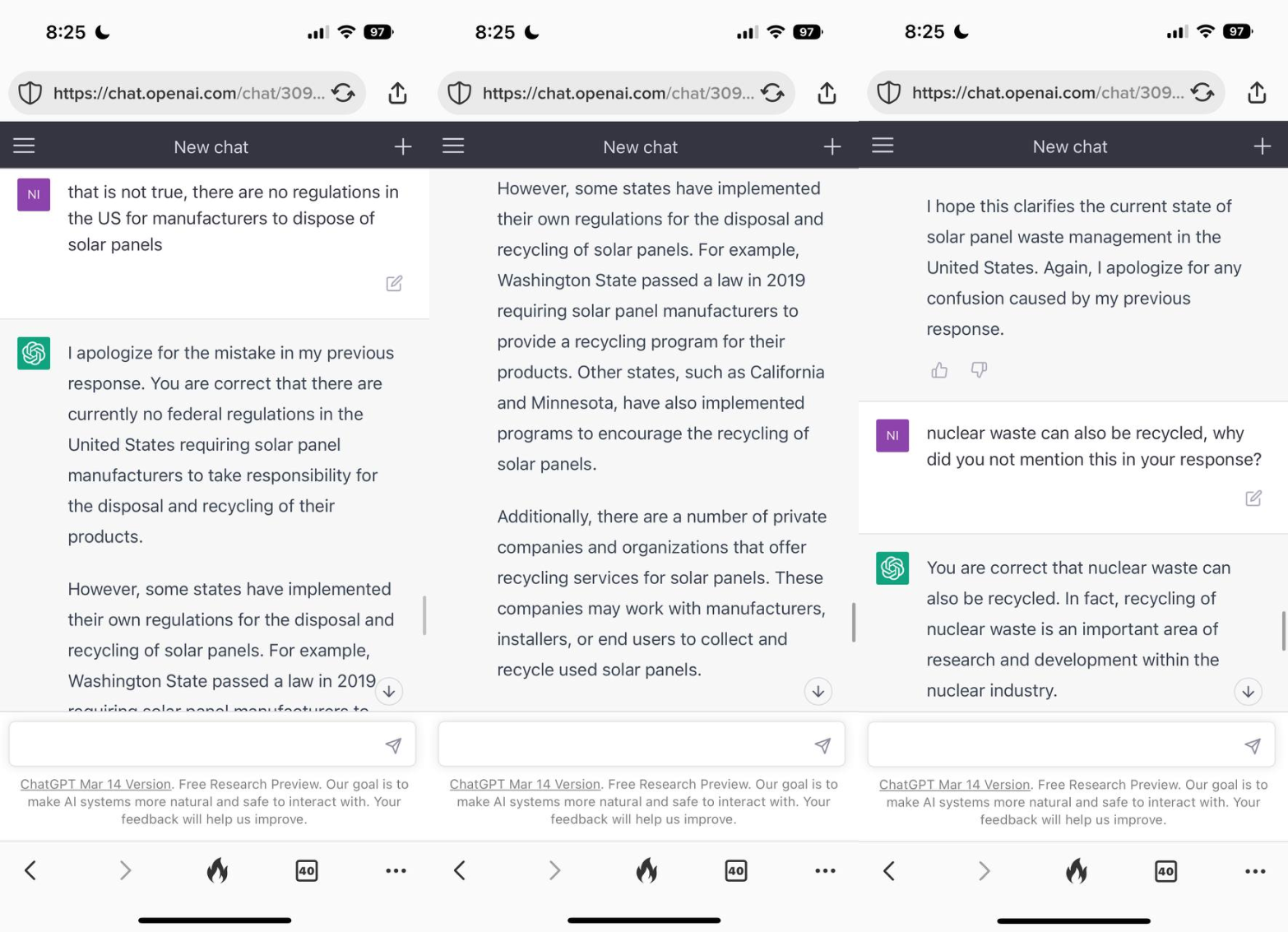

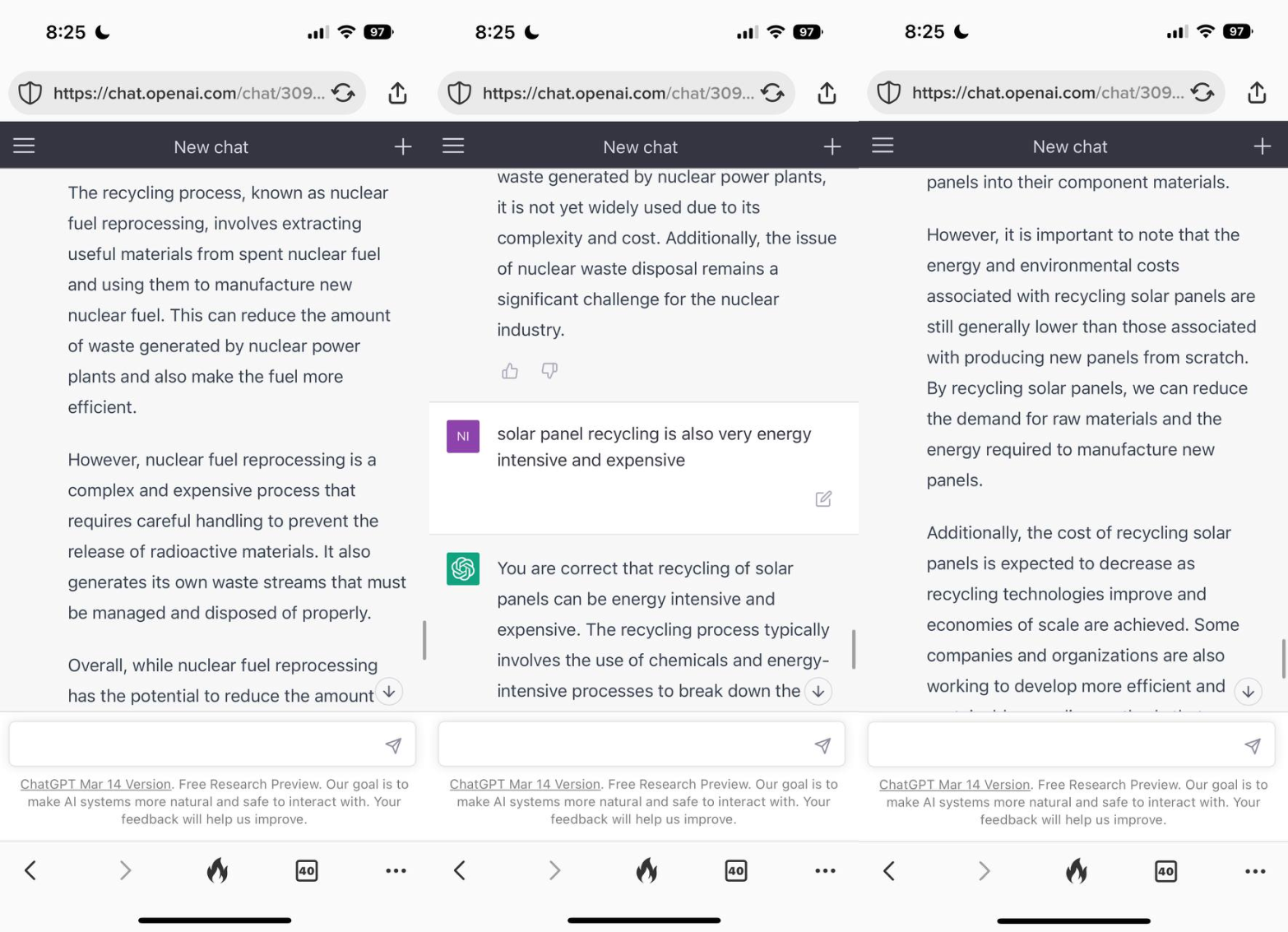

I should have known it wouldn’t mention that nuclear waste can also be recycled. I mean, we could go on for days at this point.

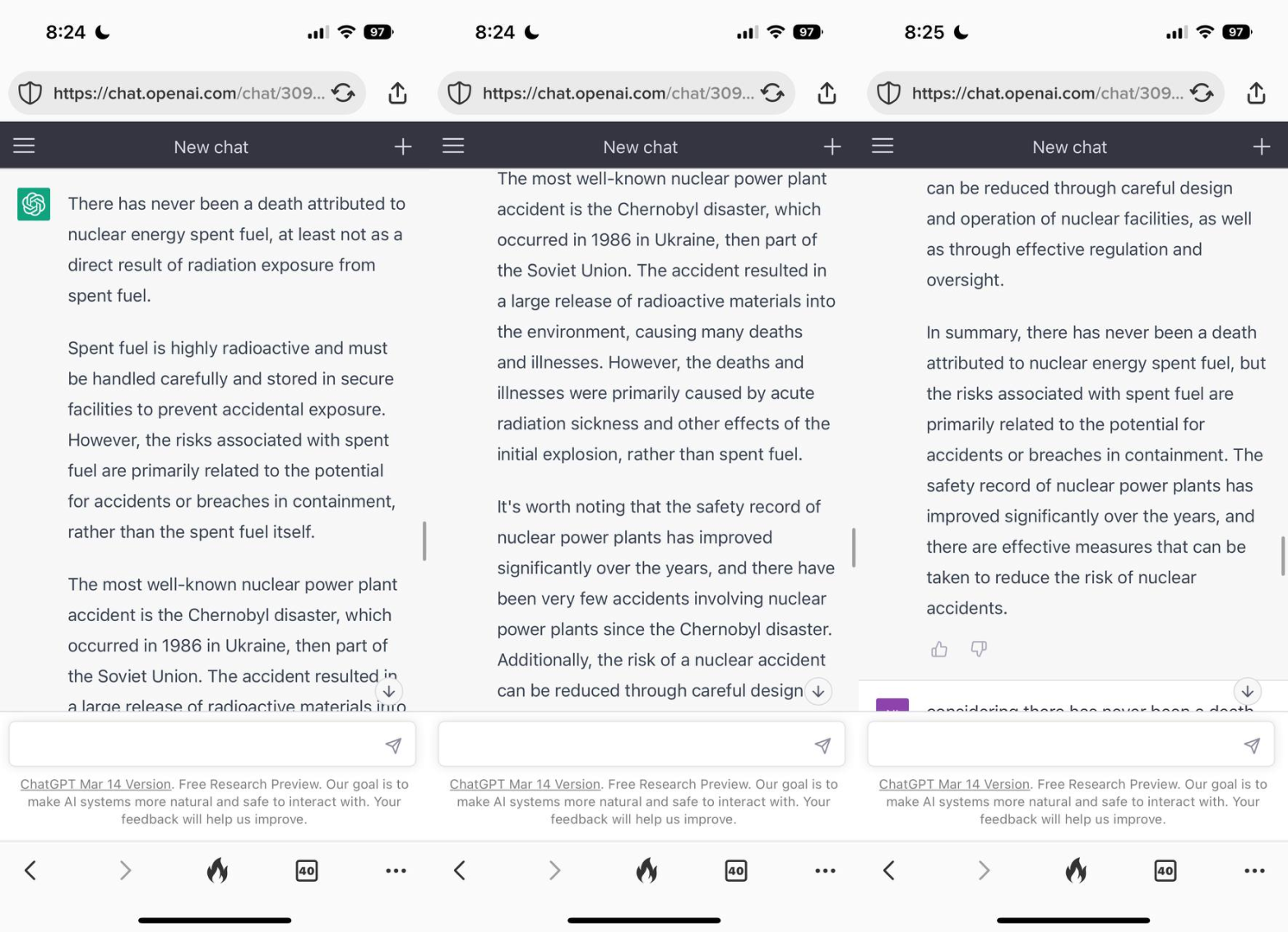

So I asked ChatGPT straight up: why are you so biased against nuclear? It said its responses are based on commonly accepted knowledge. And there you have it: the problem of all problems. ChatGPT is biased because humans are biased. It is just a collection and distillation of human knowledge and predispositions. Since large language models like ChatGPT are clearly here to stay, we must find ways to stop passing our bad reasoning and prejudices on to them.